A Note from Our Family Decision Editor: This article addresses serious financial and cognitive safety risks. If you suspect a parent has been targeted or victimized by an AI voice cloning scam, contact their bank's fraud team immediately, file a report at ic3.gov and reportfraud.ftc.gov, and call the DOJ National Elder Fraud Hotline at 833-372-8311. If the incident points to changes in your parent's judgment or memory, follow up with their primary care physician or a geriatric specialist for evaluation. The information here is educational and isn't a substitute for legal, financial, or medical guidance specific to your family's situation.

Last spring, a 75-year-old grandfather in Ohio answered a call from his granddaughter, who was sobbing about a car accident, a county jail, and a lawyer who needed bail wired in the next hour. The voice was hers, the crying was hers, even the cadence of how she said his name was hers. He drove to his bank, wired $8,000 to the account the "lawyer" provided, and went home shaken but relieved. That evening his real granddaughter, at work in another state, called to check in about a recipe and had no idea what he was talking about.

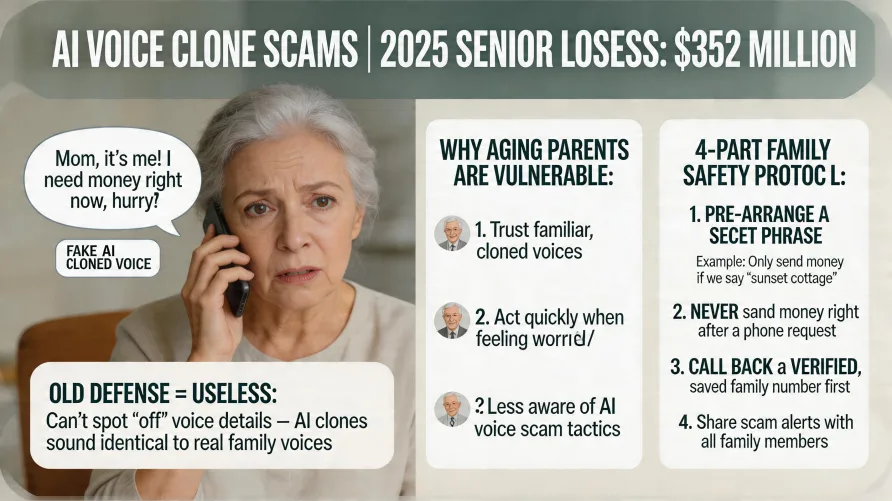

That caller was an AI voice clone, built from less than a minute of audio scraped from a social media post the granddaughter had made months earlier, and the money was gone before her grandfather understood what had happened. As of April 2026, this category of fraud has moved from rare to routine. The FBI's 2025 Internet Crime Report, released this month, shows AI-enabled scams now account for more than $893 million in reported losses, with seniors absorbing $352 million of that total. Voice cloning is one of the fastest-growing tactics in the category, and families who haven't had a specific conversation with an aging parent about it are the most exposed.

When my family went through a relative's dementia journey, we learned something the textbooks don't emphasize enough: judgment often declines before memory does. That's the faculty AI voice cloning scammers exploit. I've spent nearly 20 years in hospitals watching how older adults respond under stress, and the combination of age-related vulnerability and cloned familiar voices is harder to defend against than most families realize.

How AI Voice Cloning Scams Target Seniors

The mechanism is simpler than most families assume. A scammer needs only 3 to 30 seconds of recorded audio of a person's voice, fed into a voice cloning tool that's now free or available for a few dollars a month. The tool generates a synthetic version of that voice that can speak any text the scammer types. The scammer then calls a target, usually a grandparent or older parent, in the cloned voice of a distressed family member and runs a fake-emergency script designed to extract money before the target has time to think clearly.

The audio doesn't have to come from a private source. A TikTok video, a podcast appearance, a voicemail greeting, or a graduation speech posted to Facebook all work, which means anyone with a voice online is potentially clonable. The fake-emergency genre has settled into a few recurring scripts. The most common is the arrest call, where a "grandchild" claims to have been in an accident and pleads for bail money while begging the senior not to tell mom and dad. A second caller, posing as the lawyer or bail bondsman, then provides wire instructions and pressure to act before a fictional court deadline. Variations include the medical emergency and the impersonation of an authority figure such as a Medicare officer or federal agent.

The thread connecting every script is engineered urgency: each one forces a decision in minutes, demands secrecy from other family members, and routes money through wire transfers, gift cards, or cryptocurrency, all of which are nearly impossible to claw back once sent. Most families assume they would recognize a fake, and that assumption is what scammers count on, but the technology has already invalidated it. From my own time around hospital intake areas, I can tell you the difference between a calm decision and an adrenaline-fueled one is enormous, and that's the gap these scams are designed to open.

The Scale of AI Voice Cloning Fraud and Why Seniors Are Hit Hardest

The FBI's Internet Crime Complaint Center broke out artificial intelligence as a tracked descriptor for the first time in its 2025 annual report, and the numbers are sobering. IC3 received more than 22,000 complaints with an AI-related nexus, with adjusted losses exceeding $893 million. Seniors filed 3,100 of those complaints and reported losses exceeding $352 million. Voice cloning specifically appears as a distinct subcategory, with victims claiming losses of more than $5 million in 2025 tied to "distress scams," in which voice cloning is used to mimic a loved one in trouble. That figure almost certainly understates the real toll, since most voice cloning victims never file a report.

Senior cybercrime losses overall reached an inflection point last year. Adults aged 60 and older filed 201,266 complaints with reported losses of $7.748 billion, a 59% increase from 2024. The average loss for this age group was $38,500, nearly double the overall average, and 12,444 seniors each lost more than $100,000. The financial toll on aging Americans isn't a side issue anymore.
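For readers who want to check the arithmetic behind these figures, here is a minimal Python sketch. It uses only the numbers quoted in this article; the variable and function names are made up for illustration, and the "more than" and "exceeding" values are treated as floors, so the shares are approximate.

```python
# Figures as quoted in this article from the FBI IC3 2025 report.
# "More than" / "exceeding" values are treated as floors, so results are approximate.
SENIOR_COMPLAINTS = 201_266
SENIOR_LOSSES_USD = 7_748_000_000

AI_COMPLAINTS = 22_000
AI_LOSSES_USD = 893_000_000
AI_SENIOR_COMPLAINTS = 3_100
AI_SENIOR_LOSSES_USD = 352_000_000


def average_loss(total_losses_usd: int, complaints: int) -> float:
    """Mean reported loss per complaint."""
    return total_losses_usd / complaints


def share(part: int, whole: int) -> float:
    """Fraction of the whole represented by part."""
    return part / whole


avg_senior_loss = average_loss(SENIOR_LOSSES_USD, SENIOR_COMPLAINTS)
senior_complaint_share = share(AI_SENIOR_COMPLAINTS, AI_COMPLAINTS)
senior_loss_share = share(AI_SENIOR_LOSSES_USD, AI_LOSSES_USD)

print(f"Average loss per senior complaint: ${avg_senior_loss:,.0f}")
print(f"Seniors' share of AI-related complaints: {senior_complaint_share:.1%}")
print(f"Seniors' share of AI-related losses: {senior_loss_share:.1%}")
```

The average works out to about $38,496, which rounds to the $38,500 cited above. On the AI-related figures, seniors filed roughly 14% of the complaints but absorbed roughly 39% of the losses.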

For decades, the standard family advice for grandparent scams was simple: listen to the voice, and if something sounds off, hang up. That defense doesn't work anymore. The voice clone passes the recognition test because it sounds exactly like the relative, down to the cadence, the breath, and the way they say a particular name. Older adults who would have caught a generic impersonator are catastrophically vulnerable to a clone of someone they love. The old defense isn't just outdated; it can backfire, because a parent who concludes "yes, that's my grandson" then commits more confidently to the panic that follows. Most families don't yet realize that recognizing the voice no longer proves who is calling.

Why Cognitive Decline Creates Specific Risk

The piece of this story that gets too little attention is what happens to judgment as we age, even before any clinical diagnosis is in play. Decision-making under stress is among the first faculties to weaken, and research on aging and financial vulnerability has found that more rapid cognitive decline predicts poorer financial decision-making and greater susceptibility to scams, even in older adults considered cognitively healthy. For those in early mild cognitive impairment, the risk multiplies. Voice cloning scams compress time and amplify emotion, which makes them almost custom-built to exploit this exact vulnerability. Early signs of cognitive decline often show up in financial behavior long before they show up anywhere else.

This is the section where I'll speak personally for a moment, because I lived through what I'm describing. When our family member's cognitive decline accelerated, the judgment changes came first, and the memory was still mostly intact. What slipped was the ability to weigh options under stress, to question something that didn't sound right, to pause before committing to a decision. The financial scares followed: an impulsive purchase here, a too-trusting phone call there. By the time we recognized the pattern, we'd already been through several frightening weeks of decisions we wouldn't have made a year earlier. If a voice cloning scam had hit during that window, I'm not confident we would have stopped it in time. That's the experience that drives how seriously I take this category of fraud, and why I push families to act before they think they need to.

The hardest part is that a parent who seems "fine" most of the time can still be acutely vulnerable in the specific moment a scam call lands; the voice clone exploits the moment, not the baseline. Some forms of dementia, including frontotemporal dementia, hit judgment hard while leaving memory relatively spared, which means the parent on the phone can seem completely themselves until they have to weigh a decision under pressure. From mobile X-ray work in care facilities over the years, I've watched how often a person's worst moments aren't predicted by their best ones, and that gap is where these calls do their damage.

The Family Protocol That Actually Stops AI Voice Cloning Scams

Defending against AI voice cloning takes four interlocking pieces. None of them works alone, but together they shut down nearly every variant of this scam.

1. A Family Code Word or Verification Question

Choose a word or short question that real family members know and a scammer cannot. Avoid a pet's name, a street address, or anything findable on social media or in public records. Something arbitrary works best: a made-up word, an inside joke, or a question with an answer only family would know, like "what did Aunt Sue spill on the cake at Thanksgiving." The instruction to your parent is firm: if a caller claiming to be a family member can't produce the code word, the call ends, even if the voice is right and the story is plausible.

2. Mandatory Callback to a Known Number

Before any money moves, your parent calls back the supposed family member directly, using a phone number they already have saved rather than a number provided during the suspicious call. The FTC's specific guidance is to call the person who supposedly contacted you and verify the story using a phone number you know is theirs. If the relative doesn't pick up, your parent calls another family member to physically locate them. The callback isn't optional, and it happens before the wire, before the bank visit, before anything irreversible.

3. Bank-Level Protections

Most banks now offer fraud-protection tools specifically for older account holders, but they only work if you set them up in advance. The most important is a trusted contact designation, which the CFPB has formally recommended for years. A trusted contact gives the bank someone to call when they suspect elder financial exploitation, without giving that person any control over the account. Other tools include daily wire limits, holds on unusual transactions, and account alerts that text family when large transfers are initiated. Sit with your parent, call the bank together, and ask what's available. If you don't already have financial power of attorney in place, this is the conversation to start that process.

4. The Conversation That Has to Happen This Weekend

The fourth piece is the one most families skip. Sit down with your parent and explain how voice cloning works, showing them an example online of how realistic it has become; demos on the FTC and AARP websites take only a minute. Walk through the scripts to expect, and rehearse what the two of you will do together if a call comes. The protocol matters less than the conversation itself, because a parent who knows the threat exists and has rehearsed the response is far less likely to act on impulse when the call comes at 11 PM on a Tuesday.
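For families who find it easier to rehearse from a flowchart, the call-time part of the protocol (the code word and the callback) can be sketched as a small decision rule. This is only an illustration under stated assumptions: the class names, the status labels, and the wording of each step are hypothetical, and the bank protections and the family conversation don't appear because they are set up before any call arrives.

```python
from dataclasses import dataclass
from enum import Enum


class Callback(Enum):
    """Hypothetical labels for how the callback to a saved number went."""
    NOT_ATTEMPTED = "not attempted"
    NO_ANSWER = "no answer"
    STORY_CONFIRMED = "story confirmed"
    STORY_DENIED = "story denied"


@dataclass
class EmergencyCall:
    gave_code_word: bool
    callback: Callback = Callback.NOT_ATTEMPTED


def next_step(call: EmergencyCall) -> str:
    """Return the next action; money never moves before a confirmed callback."""
    # Piece 1: no code word means the call ends, however real the voice sounds.
    if not call.gave_code_word:
        return "End the call."
    # Piece 2: the callback is mandatory even when the code word was given.
    if call.callback is Callback.NOT_ATTEMPTED:
        return "Hang up and call the relative back on a number already saved."
    if call.callback is Callback.NO_ANSWER:
        return "Call another family member to physically locate the relative."
    if call.callback is Callback.STORY_DENIED:
        return "Treat it as a scam. Send nothing."
    return "Story verified on a known number."


print(next_step(EmergencyCall(gave_code_word=False)))
print(next_step(EmergencyCall(gave_code_word=True)))
print(next_step(EmergencyCall(gave_code_word=True, callback=Callback.NO_ANSWER)))
```

The point of the ordering is that every path short of a confirmed callback ends with no money sent, which is the behavior worth rehearsing.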

What to Do If It's Already Happened

If your parent has already wired money or sent crypto in response to a suspected voice clone scam, the next few hours matter more than the next few days. Move fast, in roughly this order. Call the bank's fraud line immediately and ask them to attempt to recall the wire. If the transfer is less than 72 hours old, the bank can request that the FBI initiate a Financial Fraud Kill Chain action, a coordinated freeze with the receiving bank that the IC3 Recovery Asset Team can use to claw money back. Funds are usually recovered only when action happens within hours.

File a complaint at ic3.gov, and a parallel report at reportfraud.ftc.gov. Call the DOJ's National Elder Fraud Hotline at 833-372-8311, where case managers can help with reporting and recovery. File a local police report even if you don't expect it to lead anywhere, because you may need it for insurance or for the bank's records. If account information was disclosed during the call, freeze the account, change online banking passwords, and place a fraud alert with the three credit bureaus. Then watch the account closely, because scammers share victim lists and follow-up attempts are common.
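To make the 72-hour window concrete, here is a rough Python sketch that works out how much of it remains for a given transfer. The 72-hour figure comes from this article; the function names and the timestamps are made up for illustration. Being inside the window doesn't guarantee a freeze or a recovery, since that depends on the bank and the FBI.

```python
from datetime import datetime, timedelta, timezone

# 72-hour figure as stated in this article; eligibility is decided by the bank and FBI.
RECALL_WINDOW = timedelta(hours=72)


def within_window(sent_at: datetime, now: datetime) -> bool:
    """True if the transfer is less than 72 hours old."""
    return now - sent_at < RECALL_WINDOW


def hours_left(sent_at: datetime, now: datetime) -> float:
    """Hours until the transfer is 72 hours old (negative once the window has passed)."""
    return (sent_at + RECALL_WINDOW - now).total_seconds() / 3600


# Hypothetical example: wire sent at 3:30 PM UTC, discovered two mornings later.
sent_at = datetime(2026, 4, 10, 15, 30, tzinfo=timezone.utc)
now = datetime(2026, 4, 12, 9, 0, tzinfo=timezone.utc)

print(within_window(sent_at, now))
print(f"{hours_left(sent_at, now):.1f} hours left")
```

In this example the wire is 41.5 hours old, so 30.5 hours remain. The number matters less than the habit of making the bank call first, while the clock is still running.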

When the immediate crisis is contained, have the harder conversation. A parent who fell for one of these scams may be signaling that judgment under stress is changing, which isn't a moral failure but a clinical signal worth following up on with a primary care physician or geriatric specialist.

The Repeat-Targeting Pattern to Watch For

One uncomfortable truth about voice cloning scams is that they rarely end after a single attempt. Scammers share lists of victims who have already paid, and follow-on calls are common within weeks of the first incident. The second wave often poses as a recovery service, a federal investigator, or a lawyer who claims to have located the original money and can return it for a fee. Seniors reported $540 million in losses to recovery scams in 2025 alone. The recovery scam exists because the original scam works, and victims who already lost money are emotionally primed to grab at any chance to get it back. If your parent has been targeted once, treat them as a higher-risk target indefinitely. And if the original incident revealed cognitive vulnerability that surprised the family, a geriatric assessment isn't an overreaction.

Closing the Window Before It Opens

Voice cloning scams are growing because they work. They exploit love, urgency, and the specific vulnerability of an aging brain trying to make a fast decision under emotional pressure. The defense, though, is well-defined and entirely within most families' control: a code word, a callback rule, a bank conversation, and a direct talk with your parent about what's out there now. Families who have this conversation before the call comes are the ones who don't end up filing reports at ic3.gov. The difference between those two outcomes is one weekend.

You don't have to figure out everything today. Pick the next concrete step: call your parent's bank about trusted contact designations, draft a code word, or schedule the conversation you've been putting off. Whatever it is, do it before the next quiet evening passes. The protection you put in place this week is the one that matters when the call actually comes.