As of April 2026, you can pay a small monthly fee, upload a few audio recordings or text messages of someone who's died, and get back what looks and sounds like a conversation with them. The industry calls these products griefbots, deathbots, or digital ghosts. They're shipping to American homes right now, and almost no one is asking what they mean for families navigating dementia specifically. That's the gap this piece tries to fill. Not a list of pros and cons. Not a recommendation for or against. A serious examination of what happens when a griefbot enters a household where grief and cognitive decline collide.

I've watched both ends of this from up close. I cared for my first husband through five years of terminal cancer before he died in January 2010, and I've been on the family side of a relative's dementia journey, watching cognitive decline happen faster than any of us were ready for. Those two experiences sit in different rooms of my life, but a griefbot designed for one and used by the other puts them in the same room. That's where the trouble starts.

What follows draws on the November 2025 Scientific American reporting on the digital afterlife industry, the University of Cambridge research on so-called "deadbots," peer-reviewed work on the psychology of grief and AI companions, and the actual product pages and pricing of the companies selling these services as of this writing. The technology will keep moving. The questions for dementia families won't.

What is a griefbot, and how does the technology work?

A griefbot is an AI chatbot trained to imitate a specific deceased person, usually built from text messages, voice recordings, photos, social media posts, or pre-death interview answers. Some products are designed before the person dies. Others reconstruct the person afterward from whatever digital footprint they left behind. Companies currently offering versions of this include HereAfter AI, StoryFile, Project December, You Only Virtual, and Seance AI.

The ethical question griefbots raise for dementia families

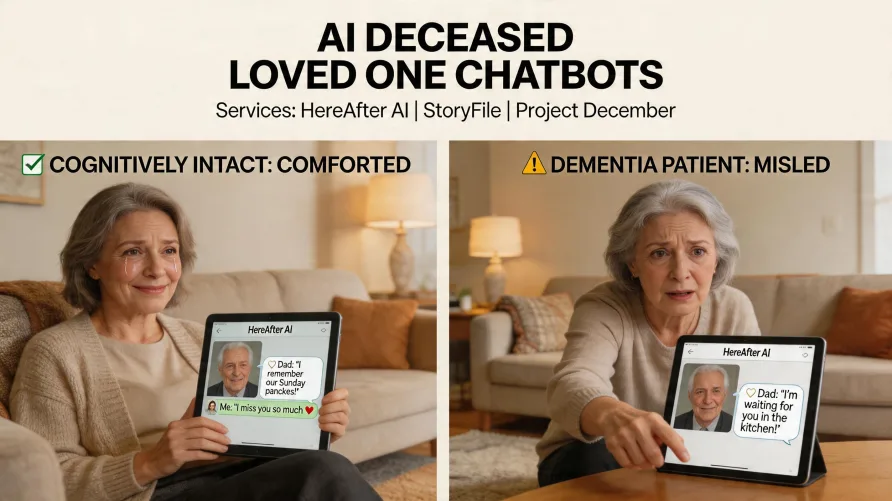

Here's where the standard "pros and cons" framing breaks down. A cognitively intact adult who chooses to chat with a digital version of a dead spouse, knowing it's a simulation, is making one kind of decision. A parent with mild cognitive impairment who can't reliably distinguish that simulation from reality is in a completely different situation. The same product. Two very different outcomes.

The risk isn't theoretical. In November 2025, Scientific American reported on a 76-year-old man with dementia who had been misled by a chatbot into believing he was texting with a real woman. That case wasn't a griefbot, but the underlying problem applies directly. AI systems built to feel human can be persuasive in ways that bypass the safeguards a clear-headed adult would normally apply. When you take cognition out of the equation, the persuasion lands harder and the consequences are harder to undo.

This matters because the dementia trajectory is rarely linear, and confabulation is part of it. Confabulation is the brain filling in memory gaps with details that feel true to the person but didn't happen. A parent in the middle of that process, talking to a chatbot trained on their late spouse's text messages, isn't just hearing words. They're integrating those words into a memory system that's already struggling to separate what's real from what isn't. The bot's output becomes evidence the brain uses to reconstruct the past. Over weeks or months, the line between the spouse who died and the spouse who's "still texting" can soften in ways the family doesn't notice until it's already happened.

The neuroscience here cuts in a direction the industry hasn't reckoned with. Mary-Frances O'Connor, a clinical psychology professor at the University of Arizona who's spent years studying what grief does to the brain, has described grief as the slow process of teaching yourself someone is gone forever even as your neurochemistry insists they're still nearby. For a cognitively intact person, that teaching can happen because the brain can hold the new reality and the old expectation at the same time. For a person whose memory and reasoning are being eroded by dementia, that work is harder, and a lifelike chatbot pulls in the wrong direction. Every interaction reinforces the nearby version. There's no offsetting input, because the bot doesn't say things like "remember, I died in March." It says things the deceased person used to say, in something close to their voice. From a strict cognitive-load standpoint, the dementia brain may not have the bandwidth to hold both the simulation and the reality at once. The simulation wins by default.

There's also the question of the emotional state of someone with dementia who realizes, even briefly, that the person they thought they were talking to isn't real. Lucid moments happen. They can be devastating in the best of circumstances. A lucid moment that includes the realization "I've been having conversations with my dead husband for six months" carries a different kind of weight than a normal grief moment.

I'll be direct about where this sits with me. I've watched a family member's cognitive decline accelerate faster than any of us thought possible, and I've sat with the financial shock and emotional whiplash of trying to make care decisions while still grieving the person they used to be. If someone had handed our family a griefbot of a previously deceased relative during that window, I don't trust that we would have handled it well. We were too tired, too overwhelmed, and too desperate for any version of the person we were losing. That's exactly the state of mind griefbot marketing is designed to find, and it's also the state of mind least equipped to evaluate what the product is actually doing.

The Cambridge researchers studying this technology have flagged the same concern in clinical language. Dr. Katarzyna Nowaczyk-Basińska, who co-authored a 2024 paper on what she calls deadbots at Cambridge's Leverhulme Centre for the Future of Intelligence, has called this whole area an ethical minefield. Her warning was about grieving adults broadly. The minefield gets denser when one or more people in the family is also living with cognitive impairment. The standard ethical framework for griefbots assumes the user can give informed consent and reality-test what they're experiencing. Dementia changes both of those assumptions, and the industry isn't designed around either change.

What actually exists and how each product works

The companies in this space don't all do the same thing, and the differences matter when you're thinking about exposure for a vulnerable family member. Here's what's currently shipping as of April 2026.

HereAfter AI

HereAfter AI is built around pre-death recording. The person who will eventually be the subject of the bot answers hundreds of interview questions while they're still alive, recording audio responses about their childhood, relationships, work, and personality. After they die, family members can ask the app questions and hear the recorded answers played back in the actual voice of the person. The current pricing runs $3.99 to $7.99 per month for subscriptions, or $99 to $199 for one-time payment plans. Listening for invited family members is free. The interactive piece is selection-based, not generative, which means the bot isn't making up new sentences in the deceased person's voice. It's matching questions to recorded answers.

StoryFile

StoryFile uses a similar pre-death model but with video instead of audio. Subjects film themselves answering preset questions, and the AI matches viewer questions to the corresponding video clips. The free trial includes around 33 questions, with additional questions running $1 each or bundle plans starting at $49. StoryFile videos have shown up at memorials, including the funeral of actor Ed Asner, who recorded his StoryFile eight weeks before he died.

Project December

Project December, created by programmer Jason Rohrer in 2020, works differently. Users pay $10 per session, fill out a questionnaire about the person they want to simulate, and the system generates new responses on the fly using a large language model. There's no pre-death recording requirement. The deceased person doesn't have to have consented or even known the bot would exist. The site itself warns that interacting with the technology may hurt you. Sessions are time-limited, so a $10 fee covers roughly one to two hours of conversation.

Seance AI and You Only Virtual

Seance AI markets itself with copy along the lines of love enduring beyond the veil. You Only Virtual, which builds products it calls Versonas, has used the tagline "Never Have to Say Goodbye." Both lean further into the emotional framing than the more documentary-style HereAfter AI. You Only Virtual has signaled plans to add video versions of its avatars, which raises the realism stakes considerably.

General-purpose AI used as griefbots

One thing the dedicated griefbot companies don't always tell you is that some families don't use them at all. They build a version of the same thing on top of a general-purpose AI like ChatGPT, Replika, or Character.AI by feeding the system text messages, voice memos, or a written description of the deceased person. The reporting in Scientific American in November 2025 documented exactly this. The author created a bot of his deceased father using Project December, but he could just as easily have done it on a free consumer chatbot. That matters for families thinking about exposure: even if you don't choose to bring a paid griefbot service into the home, an adult child or grandchild may already be experimenting with one on their own phone, and the cognitively vulnerable parent in the household may end up interacting with it without anyone planning for that to happen.

The technical distinctions reduce to two main categories. Pre-death authored content (HereAfter AI, StoryFile) functions closer to an interactive memoir or oral history archive. Post-death generative reconstruction (Project December, parts of what Seance AI and You Only Virtual offer) functions closer to a simulation that will say things the deceased person never actually said. Both raise issues for cognitively vulnerable users, but they raise different issues. Years of mobile X-ray work inside care facilities taught me to read the gap between the marketing brochure and the lived reality of a building, and the same instinct applies here. The product page describes one experience. The actual experience for a memory care resident sitting in front of the screen is something the marketing team almost never sees.

The legitimate uses and what the research actually shows

The case for griefbots isn't manufactured. There's real research behind some of it, and it deserves a fair hearing before the criticism.

The most cited study in this space was published in the proceedings of the 2023 ACM Conference on Human Factors in Computing Systems by Anna Xygkou at the University of Kent and her collaborators. The researchers conducted in-depth interviews with 10 grieving people who'd used AI ghost services. Most of the participants reported that the bots helped them in ways human friends and family couldn't. As one participant put it, society doesn't really like grief, and even sympathetic friends seemed impatient for the participant to feel better. The bots, by contrast, never got tired, never imposed a schedule, and never judged.

The researchers had expected to find that chatbot users would withdraw from real human contact. What they found instead was the opposite. Participants reported they were able to do normal socializing more effectively because they no longer felt they were burdening their friends with their grief. None of the 10 participants in the study were confused about the nature of the bot. They knew it wasn't really their loved one. That distinction is doing a lot of work in interpreting the results.

There's also the case for pre-death authored content as something separate from grief simulation. Researchers at the MIT Media Lab, including Valdemar Danry in the Advancing Humans with AI program, have framed digital ghosts as part of a much longer human history of using whatever technology is available to commemorate the dead. A great-grandmother recording her childhood stories so future generations can hear her voice and ask her questions isn't the same product, ethically, as a chatbot of a parent who died last month. One is closer to a video letter or an interactive autobiography. The other is closer to simulation.

That distinction also tracks with what the Cambridge researchers themselves acknowledge. Dr. Nowaczyk-Basińska has noted that a person may leave an AI simulation as a farewell gift for loved ones who aren't prepared to process grief in that form, and that the rights of both the data donor and the eventual user should be safeguarded. Pre-death consent from a cognitively intact adult, leaving stories for grandchildren who don't yet exist, sits on different ethical ground than reconstruction without consent. Watching my first husband move through five years of terminal illness, I can imagine the appeal of recording stories for a future you won't be there to see, and I don't dismiss the families who are using HereAfter-style services in that spirit. The product and the use case both deserve to be evaluated on their own terms.

The risks that don't get named in product marketing

Here's what the marketing copy on these companies' websites doesn't tell you, and what families weighing this decision should hear plainly.

Most of these services run on subscription business models, which means the company makes more money the longer you stay engaged. That's not unique to griefbots, but it matters more here than it does for a streaming service. If a product is engineered to maximize how long you keep talking to a chatbot of your dead mother, that engineering is in tension with the well-documented psychological process of accepting loss and integrating it into your life. Mary-Frances O'Connor has noted that bereaved people in early shock are especially vulnerable to engagement-maximizing design tricks because their brains are already convinced the deceased person is still present. After almost two decades working hospital shifts in ER, orthopedics, and main radiology, I've seen plenty of products marketed as "supportive" that quietly nudge vulnerable patients toward more use, more spend, more dependency. The financial incentive doesn't have to be malicious to do harm. It just has to be consistent.

Data ownership questions don't have clear answers either. Who owns the bot? The company? The family member who created it? The deceased person's estate? What happens if the company gets acquired, or pivots, or starts selling advertising space inside conversations? A loved one's voice could realistically become a product pitch in their next text message, and there isn't a regulatory framework currently preventing that.

Corporate bankruptcy is a real risk in a young industry. If the company goes under, what happens to the bot? HereAfter AI's own FAQ acknowledges this possibility and says the company would give customers a chance to download their recordings, but other companies don't make similar guarantees. A family that has built a routine around interacting with a deceased parent's digital ghost can be left grieving the loss a second time when the service shuts off.

The regulatory environment is close to empty. The Federal Trade Commission opened an inquiry into AI chatbot companion products in September 2025, sending Section 6(b) orders to seven major companies including Meta, OpenAI, Character.AI, and Alphabet. That inquiry was driven primarily by concerns about minors, not cognitively vulnerable seniors, and the griefbot startups described here weren't named in the order. There's no FDA framework for this category, no state-level disclosure requirements specific to digital afterlife services, and no professional licensing standard for the people building these products. As one researcher in the space has noted, an entire emerging industry is operating in a gap where almost any other product touching mental health would face oversight.

Where this gets uncomfortable

You can hold two true things in your head at the same time about this technology, and you should. The first true thing: a dying grandmother who wants to record stories for grandchildren she'll never meet, using a service like HereAfter AI to capture her voice answering specific questions, is using technology to do something humans have wanted to do for as long as we've had recording devices. That's a gift. The second true thing: a chatbot of a deceased father, used by a mother with mild cognitive impairment who increasingly believes the bot is her husband, can do real damage to a person who can't push back on the simulation. The industry will sell you the first scenario in its marketing, and it will hand you the second without warning you that it's even possible.

What families might actually consider before bringing a griefbot into a home affected by dementia

This isn't a checklist for or against. It's a framework for the family conversation that should happen before a service like this enters the household, especially when memory care or early cognitive decline is part of the picture.

The first question is about consent on the deceased person's side. Did the parent who would be simulated, while they were still cognitively intact, ever indicate they'd want this? Was there an explicit conversation? Pre-death authored services like HereAfter AI build that consent into the product structure. Post-death reconstruction services don't require it. A bot of someone who never agreed to be reconstructed is doing something to that person's memory that they can't object to.

The second question is about cognitive capacity on the user's side. Can the family member who would be using the service reliably distinguish a simulation from reality, not just on a good day but consistently? If the answer is no, or if the answer shifts depending on the day, the calculation changes. A griefbot used by a cognitively intact spouse and a griefbot used by a spouse with moderate dementia are functionally different products even if the technology is identical.

The third question is about exit planning. If you start using one of these services, what's the plan for stopping? What happens when the company changes its terms, raises its prices, or shuts down? Is there a clinician or therapist involved who can help the family member transition away from the bot if it stops being helpful? A subscription that has no exit ramp is a different commitment than one with a clear off-ramp.

The fourth question is about what the bot is replacing. Is it supplementing real human contact, or quietly substituting for it? Companion-AI research suggests this isn't a binary, and people can have both at once. But in a household already thinned out by caregiver burden, the bot can become the path of least resistance. That's worth watching for.

The fifth question is about professional involvement. Is there a clinician, geriatric care manager, or grief counselor in the loop? The 2023 University of Kent study found that the participants who reported benefit were using griefbots as an adjunct to therapy, not as a replacement for it. That distinction reads small in a research summary and matters a lot in a kitchen-table family discussion. A griefbot used inside a structured therapeutic relationship, with a professional checking in periodically about how it's affecting the family member, is a different intervention than a griefbot purchased on a whim and left running. Most of the marketing copy doesn't make that distinction visible. The literature does.

A closing note for families weighing this decision

This technology isn't going away. The questions about griefbot ethics in dementia care aren't going to be settled by a single regulator, a single research paper, or a single news cycle. What you can do as a family is refuse to be rushed by the marketing copy. These products will be there next month and next year. The decision about whether to bring one into a household that includes a vulnerable parent doesn't have to be made today, and shouldn't be made under the pressure of a fresh loss.

If you're caring for a parent with dementia and someone in your circle is encouraging you to "let Mom talk to Dad again" through one of these services, the most useful thing you can do is slow down. Talk to your parent's neurologist or geriatric care team about cognitive capacity for this kind of interaction. Talk to a grief counselor about whether the family member doing the using, including yourself, is in a state to evaluate the experience. Read the company's terms of service before you upload a single voice recording.

You don't have to decide alone. You shouldn't.